Micron Introduces High-Density Memory for AI Workloads

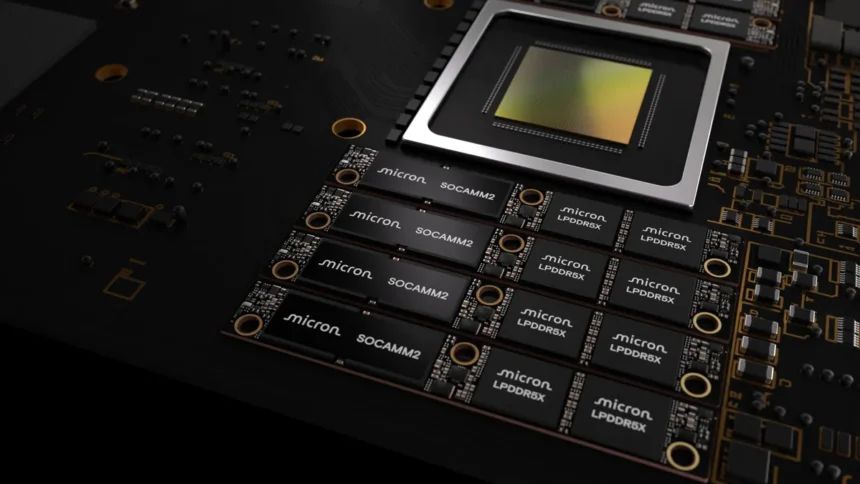

Large language models and modern AI inference pipelines demand vast memory pools, prompting hardware makers to redesign server architectures. Micron now offers a 256GB SOCAMM2 memory module tailored for data centers, balancing capacity, bandwidth, and power efficiency.

The module packs 64 monolithic 32Gb (gigabit) LPDDR5X dies into a single compact LPDRAM module, meeting the expanding memory needs of AI applications.
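As a quick consistency check, the sketch below shows how 64 dies of 32Gb each add up to a 256GB module. It assumes the die density is quoted in gigabits and ignores any ECC or spare capacity. The function name `module_capacity_gb` is hypothetical and used only for illustration.

```python
# Convert a per-die density in gigabits (Gb) into a module capacity in gigabytes (GB).
# Assumption: every die contributes its full density (no ECC or spare capacity modeled).
BITS_PER_BYTE = 8

def module_capacity_gb(die_count: int, die_density_gbit: int) -> float:
    """Return total module capacity in GB for die_count dies of die_density_gbit each."""
    return die_count * die_density_gbit / BITS_PER_BYTE

# 64 dies x 32Gb = 2048Gb, and 2048Gb / 8 = 256GB
print(module_capacity_gb(64, 32))  # 256.0
```

Reading the die density as 32GB instead would give 2TB per module, which is why the gigabit unit matters here.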

Boosting Server Memory to 2TB

The design raises the maximum memory available per processor. Populating an eight-channel server CPU with eight SOCAMM2 modules yields 2TB of LPDRAM in total, a 33% increase over configurations built from the previous 192GB modules. The extra capacity supports larger context windows and more demanding inference workloads.
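The per-processor figures can be checked the same way. This sketch assumes one module per memory channel and the binary convention (1TB = 1024GB) under which 2048GB is reported as 2TB; the constant names are illustrative, not taken from Micron.

```python
# Per-socket capacity and generational gain, using the figures quoted in the article.
MODULES_PER_CPU = 8      # assumption: one SOCAMM2 module per channel on an eight-channel CPU
NEW_MODULE_GB = 256      # new SOCAMM2 module capacity
PRIOR_MODULE_GB = 192    # previous-generation module capacity
GB_PER_TB = 1024         # binary convention, so 2048GB is reported as 2TB

total_gb = MODULES_PER_CPU * NEW_MODULE_GB
gain_pct = (NEW_MODULE_GB - PRIOR_MODULE_GB) / PRIOR_MODULE_GB * 100

print(f"{total_gb} GB = {total_gb / GB_PER_TB:.0f} TB")  # 2048 GB = 2 TB
print(f"gain: {gain_pct:.1f}%")                          # gain: 33.3%
```

The 33% figure is the per-module gain; because the module count stays at eight, the per-socket total grows by the same proportion (from 1536GB to 2048GB).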

Power Efficiency and Compact Design

Micron positions SOCAMM2 as more power-efficient than traditional server memory. “Micron’s 256GB SOCAMM2 offering enables the most power-efficient CPU-attached memory solution for both AI and HPC,” stated Raj Narasimhan, senior vice president and general manager of Micron’s Cloud Memory Business Unit. “Our continued leadership in low-power memory solutions for data center applications has uniquely positioned us to be the first to deliver a 32Gb monolithic LPDRAM die, helping drive industry adoption of more power-efficient, high-capacity system architectures.”

According to Micron, the module draws about one-third the power of equivalent RDIMMs and occupies one-third of the space, which allows denser racks, lower thermal loads, and reduced data center infrastructure costs.

The modular SOCAMM2 suits liquid-cooled servers and eases maintenance and upgrades as AI models grow more complex.

Performance Gains in AI Inference

In unified memory configurations, the module accelerates key-value (KV) cache offloading, delivering more than 2.3x faster time-to-first-token in long-context inference. In standalone CPU workloads, it achieves more than 3x better performance per watt than standard modules.

Micron’s LPDRAM lineup includes components from 8GB to 64GB and SOCAMM2 modules from 48GB to 256GB. Customer samples of the 256GB version are now shipping.
