
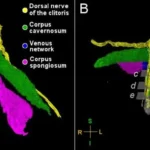

In one paper Eleos AI published, the nonprofit argues for evaluating AI consciousness using a “computational functionalism” approach. A similar idea was once championed by none other than Putnam, though he criticized it later in his career. The theory suggests that human minds can be thought of as specific kinds of computational systems. From there, you can then figure out if other computational systems, such as a chatbot, have indicators of sentience similar to those of a human.

Eleos AI said in the paper that “a major challenge in applying” this approach “is that it involves significant judgment calls, both in formulating the indicators and in evaluating their presence or absence in AI systems.”

Model welfare is, of course, a nascent and still evolving field. It’s got plenty of critics, including Mustafa Suleyman, the CEO of Microsoft AI, who recently published a blog about “seemingly conscious AI.”

“This is both premature, and frankly dangerous,” Suleyman wrote, referring generally to the field of model welfare research. “All of this will exacerbate delusions, create yet more dependence-related problems, prey on our psychological vulnerabilities, introduce new dimensions of polarization, complicate existing struggles for rights, and create a huge new category error for society.”

Suleyman wrote that “there’s zero evidence” today that conscious AI exists. He included a link to a paper that Long coauthored in 2023 that proposed a new framework for evaluating whether an AI system has “indicator properties” of consciousness. (Suleyman did not respond to a request for comment from WIRED.)

I chatted with Long and Campbell shortly after Suleyman published his blog. They told me that, while they agreed with much of what he said, they don’t believe model welfare research should cease to exist. Rather, they argue that the harms Suleyman referenced are the exact reasons why they want to study the topic in the first place.

“When you have a big, complicated problem or question, the one way to guarantee you are not going to solve it is to throw your hands up and be like ‘Oh wow, this is too difficult,’” Campbell says. “I think we should at least try.”

Testing Consciousness

Model welfare researchers primarily concern themselves with questions of consciousness. If we can prove that you and I are conscious, they argue, then the same logic could be applied to large language models. To be clear, neither Long nor Campbell thinks that AI is conscious today, and they also aren’t sure it ever will be. But they want to develop tests that would allow us to prove it.

“The delusions are from people who are concerned with the actual question, ‘Is this AI, conscious?’ and having a scientific framework for thinking about that, I think, is just robustly good,” Long says.

But in a world where AI research can be packaged into sensational headlines and social media videos, heady philosophical questions and mind-bending experiments can easily be misconstrued. Take what happened when Anthropic published a safety report that showed Claude Opus 4 may take “harmful actions” in extreme circumstances, like blackmailing a fictional engineer to prevent it from being shut off.
